DICONDE stands for ‘Digital Imaging and Communication in Non-Destructive Evaluation’ and was developed by ASTM International. ASTM International recognized early on that NDT (Non-Destructive Testing) needed a standard format to display, store, transfer and inspect digital RT (X-ray) images in a uniform way. The development goes back to the medical field. Doctors and hospitals have the same need, and there the data format is DICOM (Digital Imaging and Communications in Medicine).

DICONDE is an open (non-proprietary) data format. Users working with different DICONDE-compliant systems can share their data with each other, and a receiver with DICONDE-compliant software can open and review the received images. No specific proprietary solution is needed.

The DICONDE structure matters even more. The metadata of an image is saved in the so-called DICONDE tags in a clear and uniform structure, so all relevant image information is shared automatically along with the image. This makes archiving easier and more secure.
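DICONDE files use the DICOM encoding, so it helps to see what a "tag" is. Every metadata item is a data element made up of four parts: a (group, element) tag number, a two-letter value representation (VR), a length, and the value itself. The sketch below encodes and decodes a few text elements in the Explicit VR Little Endian transfer syntax, using only the Python standard library. It is a simplified illustration, not a DICONDE writer. It handles only short text VRs and omits the 128-byte preamble, the "DICM" prefix and the file meta information that a real file needs. The tag values are illustrative. The mapping of the DICOM patient tags to DICONDE component fields is an assumption that should be checked against ASTM E2339. In practice a library such as pydicom would be used instead.

```python
import struct

# Text VRs that use a 2-byte length field in Explicit VR Little Endian (subset only).
SHORT_TEXT_VRS = {"AE", "AS", "CS", "DA", "DS", "DT", "IS", "LO", "LT", "PN", "SH", "ST", "TM", "UI"}


def encode_element(group: int, element: int, vr: str, value: str) -> bytes:
    """Encode one text data element: tag, VR, 2-byte length, value (padded to even length)."""
    if vr not in SHORT_TEXT_VRS:
        raise ValueError(f"VR {vr} is not handled in this sketch")
    data = value.encode("ascii")
    if len(data) % 2:  # DICOM values must have an even length
        data += b"\x00" if vr == "UI" else b" "  # UIDs are padded with NUL, text with a space
    return struct.pack("<HH2sH", group, element, vr.encode("ascii"), len(data)) + data


def decode_elements(buf: bytes) -> dict:
    """Parse elements written by encode_element into {(group, element): (vr, value)}."""
    out, pos = {}, 0
    while pos < len(buf):
        group, element, vr, length = struct.unpack_from("<HH2sH", buf, pos)
        pos += 8  # tag (4) + VR (2) + length (2)
        raw = buf[pos:pos + length]
        pos += length
        out[(group, element)] = (vr.decode("ascii"), raw.decode("ascii").rstrip(" \x00"))
    return out


# Usage with illustrative values; elements are written in ascending tag order, as DICOM requires.
elements = [
    (0x0008, 0x0020, "DA", "20240115"),  # Study Date
    (0x0010, 0x0010, "PN", "PIPE-12"),   # Patient's Name in DICOM; component name in DICONDE (assumption)
    (0x0010, 0x0020, "LO", "C-0042"),    # Patient ID in DICOM; component ID in DICONDE (assumption)
]
blob = b"".join(encode_element(*e) for e in elements)
parsed = decode_elements(blob)
print(parsed[(0x0010, 0x0010)])  # ('PN', 'PIPE-12')
print(len(blob))                 # 46
```

Because every tag carries its own VR and length, any compliant reader can walk the data elements without knowing which vendor wrote the file. This is what makes the exchange between different DICONDE systems possible.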

If you have more questions or would like more detailed information about DICONDE, contact us.

ADR stands for ‘Automatic Defect Recognition’. It helps NDT inspectors save time in their daily work and also improves the quality of the inspection.

But how does it work? ADR is based on Artificial Intelligence (AI).

“AI leverages computers and machines to mimic the problem-solving and decision-making capabilities of the human mind.”

The editors of Encyclopaedia Britannica describe AI as the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings. The term is frequently applied to the project of developing systems endowed with the intellectual processes characteristic of humans, such as the ability to reason, discover meaning, generalize, or learn from past experience. Since the development of the digital computer in the 1940s, it has been demonstrated that computers can be programmed to carry out very complex tasks, for example discovering proofs for mathematical theorems or playing chess, with great proficiency. Still, despite continuing advances in computer processing speed and memory capacity, there are as yet no programs that can match human flexibility over wider domains or in tasks requiring much everyday knowledge. On the other hand, some programs have attained the performance levels of human experts and professionals in certain specific tasks. Artificial intelligence in this limited sense is found in applications as diverse as medical diagnosis, computer search engines, voice or handwriting recognition, and now also NDT inspection.

Today's AI applications are built mainly on machine learning and deep learning. The two terms are often used interchangeably, but they are not the same. Machine learning and deep learning are both sub-fields of artificial intelligence, and deep learning is in turn a sub-field of machine learning.

IBM's website (www.ibm.com) offers a good explanation of the differences, shown in the following figure:

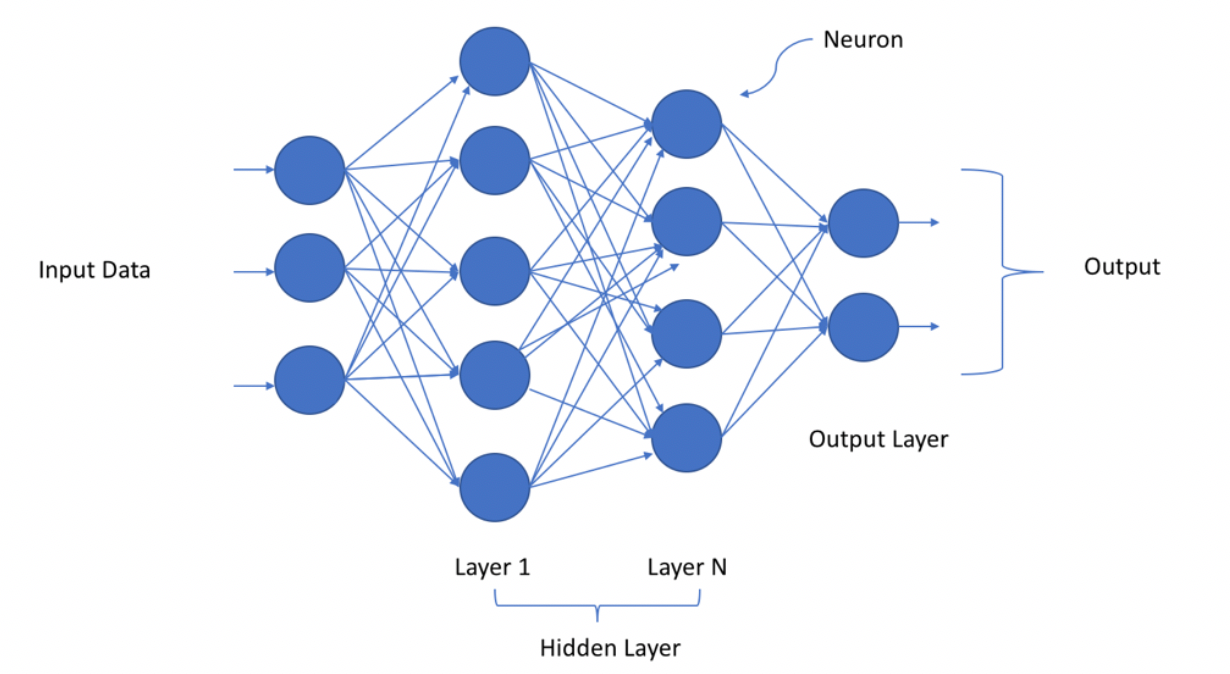

Deep learning is built on neural networks. The “deep” in deep learning refers to the depth of the network. A neural network with more than three layers, counting the input and the output layers, can be considered a deep learning algorithm.
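The "more than three layers" rule of thumb can be made concrete with a minimal, untrained sketch. A network is described by a hypothetical list of layer sizes, from the input layer to the output layer. The sketch counts the layers, including input and output, and runs one forward pass. The layer sizes, the random weights and the function names are illustrative assumptions. A real ADR model would learn its weights from training data and would normally be built with a deep learning framework rather than plain Python.

```python
import random


def count_layers(layer_sizes):
    """Number of layers, counting both the input and the output layer."""
    return len(layer_sizes)


def is_deep(layer_sizes):
    """Rule of thumb from the text: more than three layers in total counts as 'deep'."""
    return count_layers(layer_sizes) > 3


def init_network(layer_sizes, seed=0):
    """Random weights and zero biases for each connection between consecutive layers (untrained)."""
    rng = random.Random(seed)
    return [
        ([[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)], [0.0] * n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    ]


def forward(network, x):
    """Feed an input vector through every layer: ReLU on hidden layers, linear output layer."""
    for i, (weights, biases) in enumerate(network):
        z = [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, biases)]
        x = z if i == len(network) - 1 else [max(0.0, v) for v in z]
    return x


shallow = [4, 8, 2]        # input, one hidden layer, output: 3 layers
deep = [4, 16, 16, 8, 2]   # input, three hidden layers, output: 5 layers
print(is_deep(shallow), is_deep(deep))  # False True
net = init_network(deep)
print(len(forward(net, [0.1, 0.5, 0.2, 0.9])))  # 2 output values
```

Only the number of layers decides whether the network counts as "deep". The layer widths do not affect it.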

Deep learning and machine learning differ in how each algorithm learns. Deep learning automates much of the feature extraction part of the process. This removes some of the manual human intervention required and enables the use of larger data sets. As Lex Fridman noted in an MIT lecture, you can think of deep learning as “scalable machine learning”. Classical, or “non-deep”, machine learning depends more on human intervention to learn. Human experts determine the hierarchy of features used to tell data inputs apart, which usually requires more structured data.

“Deep” machine learning can use labeled datasets (supervised learning) to inform its algorithm, but it does not necessarily require them. It can ingest unstructured data in its raw form (e.g. text, images) and automatically determine the hierarchy of features that distinguish different categories of data from one another. Unlike classical machine learning, it does not need a human to define these features, which allows machine learning to scale in more interesting ways.

Back to ADR: Automatic Defect Recognition is a software tool based on machine learning and deep learning. It learns to detect and characterize anomalies in, for example, a weld. The detected indications are displayed on the RT monitor, helping the NDT inspector work more effectively and save time. The ADR tool is trained with learning processes and a range of algorithms, so it keeps learning and becomes more accurate as it is trained on more inspections.

We at PACSESS see the Automatic Defect Recognition tool as an assisting system, not as a decision-making system.

The ADR software assists the decision on whether a weld is made correctly, but the decision itself is made by the inspector.
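The "assist, not decide" principle can be shown with a minimal sketch. ADR suggestions are attached to an inspection record and ordered for review, but a report cannot be issued without the inspector's verdict. The `Indication` and `WeldReport` structures and the `review_queue` and `finalize` functions are hypothetical illustrations. They are not the PACSESS software interface.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Indication:
    """One anomaly suggested by the ADR model (hypothetical structure)."""
    x: int             # pixel position in the radiograph
    y: int
    kind: str          # illustrative labels, e.g. "porosity", "crack"
    confidence: float  # model score between 0 and 1


@dataclass
class WeldReport:
    weld_id: str
    adr_suggestions: List[Indication]  # kept for traceability only
    inspector_verdict: str             # "accept" or "reject", set by a human


def review_queue(suggestions: List[Indication]) -> List[Indication]:
    """Show the inspector all ADR suggestions, most confident first; nothing is dropped."""
    return sorted(suggestions, key=lambda s: s.confidence, reverse=True)


def finalize(weld_id: str, suggestions: List[Indication],
             inspector_verdict: Optional[str]) -> WeldReport:
    """Issue a report only once the inspector has decided; ADR output never sets the verdict."""
    if inspector_verdict not in ("accept", "reject"):
        raise ValueError("an inspector verdict ('accept' or 'reject') is required")
    return WeldReport(weld_id, review_queue(suggestions), inspector_verdict)


# Usage: the ADR model flags two indications; the inspector reviews them and decides.
hits = [Indication(120, 40, "porosity", 0.62), Indication(300, 55, "crack", 0.91)]
report = finalize("W-001", hits, inspector_verdict="reject")
print([h.kind for h in report.adr_suggestions])  # ['crack', 'porosity']
print(report.inspector_verdict)                  # reject
```

The ADR output only sets the order in which the inspector reviews the indications. The inspector can still accept a weld the model flagged, for example after judging an indication to be a false positive.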

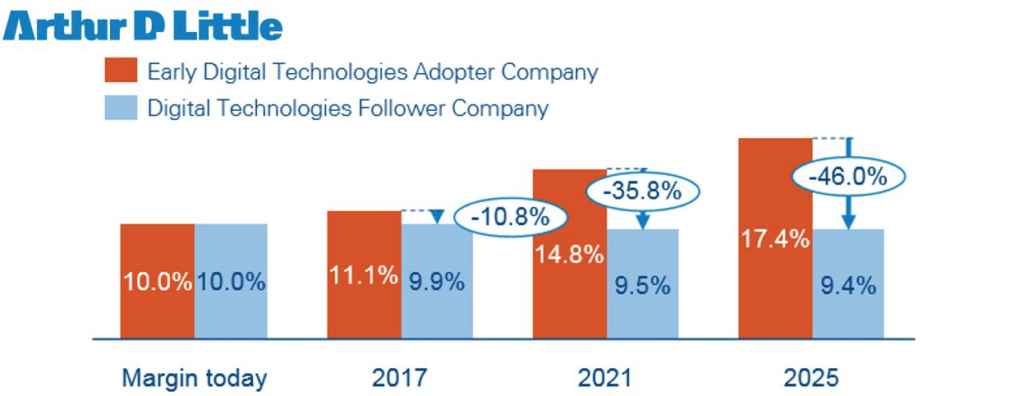

According to extensive market research by Arthur D. Little, Industry 4.0 and related new technologies, such as the “Internet of Things”, “cyber-physical systems” and “additive manufacturing”, will drive radical performance improvements in terms of cost and customer excitement. CxOs in all industries are currently defining how to explore and exploit the benefits. The bad news is that the variety of technologies and the limited number of industrialized examples make the complexity of the topic hard to understand. The good news is that this is far more than buzzwords: the new technologies have real game-changing potential. Savings of between 15 and 50 percent per cost line can be achieved on the operations side. Leaders need to act now. The challenge is to define a powerful operations concept that is forward-looking and delivers measurable short-term benefits.

We at PACSESS have seen customers boost their productivity by up to 30% using our Enterprise platform, simply by reducing the time spent on traditional, time-consuming file handling to virtually zero.

According to Varex Imaging, one of the market leaders in medical digital radiology and one of our partners, these are the main differences between computed radiography (CR) and digital radiography (DR):

Computed radiography (CR) cassettes use photo-stimulated luminescence screens to capture the X-ray image, instead of traditional X-ray film. The CR cassette goes into a reader to convert the data into a digital image. Digital radiography (DR) systems use active matrix flat panels consisting of a detection layer deposited over an active matrix array of thin film transistors and photodiodes. With DR the image is converted to digital data in real-time and is available for review within seconds.

Both CR and DR have a wider dose range than film and can be post-processed to correct mistakes and avoid repeat examinations. Even so, DR has some significant advantages over CR. DR improves workflow by producing higher-quality images instantaneously, while providing two to three times more dose efficiency than CR.
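Here is a short worked reading of the "two to three times more dose efficiency" figure. It assumes the figure refers to detective quantum efficiency (DQE), the usual measure of how efficiently a detector turns incident X-ray dose into image signal-to-noise ratio (SNR). DQE is treated as a single number here, although in reality it varies with spatial frequency, and the input SNR² is taken as proportional to the dose $D$:

$$\mathrm{SNR}_{\text{out}}^2 = \mathrm{DQE}\cdot\mathrm{SNR}_{\text{in}}^2, \qquad \mathrm{SNR}_{\text{in}}^2 \propto D$$

Requiring the same output SNR from both detectors gives

$$\mathrm{DQE}_{\text{DR}}\, D_{\text{DR}} = \mathrm{DQE}_{\text{CR}}\, D_{\text{CR}} \;\Rightarrow\; \frac{D_{\text{DR}}}{D_{\text{CR}}} = \frac{\mathrm{DQE}_{\text{CR}}}{\mathrm{DQE}_{\text{DR}}} \approx \frac{1}{2}\ \text{to}\ \frac{1}{3}$$

Under these assumptions, DR reaches the same image quality with roughly one half to one third of the dose. At constant source output, that means proportionally shorter exposure times.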

The good and bad of CR is that it enables digital imaging with the traditional workflow of X-ray film. With CR, like film, no synchronization to the generator is required, which had been a requirement for DR imaging. However, recent advances in DR panels are improving their flexibility, portability, and affordability.

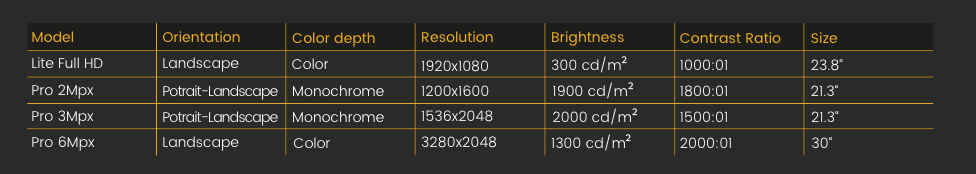

Using a dedicated radiographic monitor is highly recommended. Modern office monitors offer high resolution nowadays. In digital radiography, however, the most important parameters for a perfect visualization of digital X-ray images are brightness and contrast ratio. Find below a simple comparison between our radiographic monitors and a standard 4K office display, and you will immediately see the difference!
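A simple worked example shows why brightness and contrast ratio matter. The contrast ratio $C$ of a display is its maximum (white) luminance divided by its minimum (black) luminance. Ambient light reflected from the screen, $L_{\text{amb}}$, adds to both. The luminance values below are hypothetical and do not describe any specific monitor.

$$C = \frac{L_{\text{white}}}{L_{\text{black}}}, \qquad C_{\text{eff}} = \frac{L_{\text{white}} + L_{\text{amb}}}{L_{\text{black}} + L_{\text{amb}}}$$

Display A, with $L_{\text{white}} = 350$ cd/m² and $L_{\text{black}} = 0.35$ cd/m²: $C = 1000{:}1$. With $L_{\text{amb}} = 1$ cd/m², $C_{\text{eff}} = 351/1.35 = 260{:}1$.

Display B, with $L_{\text{white}} = 1000$ cd/m² and $L_{\text{black}} = 0.5$ cd/m²: $C = 2000{:}1$. With the same ambient light, $C_{\text{eff}} = 1001/1.5 \approx 667{:}1$.

The brighter display keeps much more of its contrast under the same viewing conditions, which helps keep subtle density differences in a radiograph visible.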

Our software includes a permanent stand-alone viewer when you export your images to DICONDE files.